Tomorrow, Oculus starts taking consumer pre-orders for its heralded Rift VR headset, and many people are wondering whether 2016 will be the year of virtual reality … just as they wondered in 2015, and 2014 … and as they wondered in 2009, when I left Second Life. At that time, I remained certain that virtual reality was the future of online interaction, but that it would be at least 10 years before the field could achieve mass consumer success. Virtual reality actually has many decades of history as a technical project, as well as a rich history in fiction that demonstrates its enduring appeal.

People keep misjudging when “THE year” for virtual reality will arrive because they fail to properly assess the progress required in each of the fields that together make a truly compelling VR experience. Observers see great progress in just one field, and they assume that it’s enough to break open mass consumer interest. In fact, there are SEVEN fields required for VR success, and “the year of virtual reality” won’t happen until every one of them has progressed past a minimum development threshold. Here’s a brief rundown of each field: WHAT it is, WHY it’s important, and WHEN it’ll be ready for a truly compelling VR experience.

1. GRAPHICS COMPUTING POWER

WHAT: The most obvious requirement is that a computer needs to be powerful enough to make a compelling simulation of reality. Now, what’s “compelling” is open to argument, and I would argue that some relatively primitive figures can make up a compelling environment if they move, interact with each other, and react to you in an engaging way.

WHY: I guess you could have virtual reality without computers, just as you can have it without compelling graphics. I mean, that’s called “storytelling” and it’s pretty cool. But that’s not what we’re talking about here. Some minimum level of simulated graphics is required.

WHEN: Sufficient power exists now, and has existed for at least seven years. But if your requirement for visual fidelity is very high, then you might think that even today’s computers aren’t powerful enough.

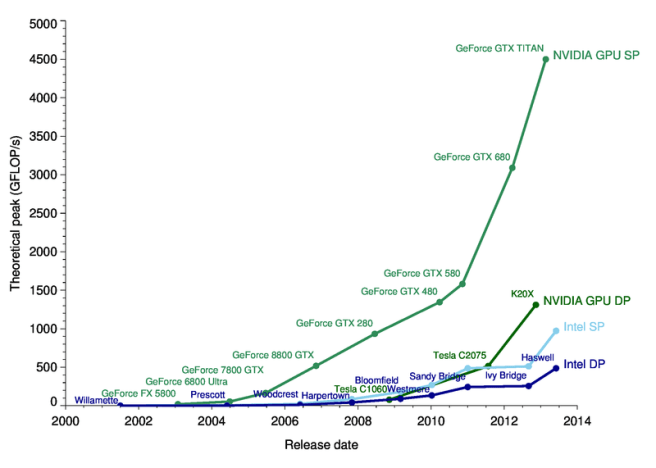

The technical measurement discussion isn’t too interesting, so please skip this paragraph and the accompanying graph if you’re not inclined to pick over this kind of detail. There’s no single measure of computing power, but as a rough analogue I’d pick FLOPS, and to simplify further I’d talk only about GPU FLOPS, noting that CPU performance can be expressed in equivalent terms. Because I believe that an experience composed of rough primitives can be compelling, I’d say that even one GPU GFLOPS is sufficient to support a compelling experience, and we’ve had that in home computers since 1999. But giving room for argument, I can raise the requirement 500-fold, to 500 GPU GFLOPS, and we still arrive at 2007-8 as the time when consumer-level computers had enough power to make virtual reality.

2. PERSONAL COMPUTING DEVICES

WHAT: Unlike the first field, this is less about predicting the power that computers have than it is about predicting what type of computer people will use. “Personal computing” used to mean desktop computers, but now people actually carry computers on their persons. Today, the type of computer that is most commonly in use is the mobile smartphone.

WHY: Philip Rosedale frequently said that when he started SL, he underestimated the time that it would take to get to mass market use of virtual reality, because he was only looking at the increasing power of desktop computers. He didn’t predict the shift to laptops, which happened in the early 2000s. Using smaller computers generally means using less powerful computers, so although desktop computing power was sufficient to simulate reality by the mid-2000s, the computers that people actually used were laptops, which were not powerful enough. Today, the computer that most people use is a mobile phone, which is even less powerful.

WHEN: By the same standard as above, smartphones will be able to simulate a compelling VR experience in 2017.

(Boring, skippable paragraph follows.) As above, this assumes the requirement is 500 GPU GFLOPS, without arguing too much about what that number really means. A high-end smartphone today can do about 180 GPU GFLOPS, with more power coming soon. (For comparison, a PS4 game console can do over 1800 GPU GFLOPS.) Taking Moore’s Law narrowly and literally, it will be 2017 before smartphones exceed 500 GFLOPS.
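The projection above is simple exponential arithmetic, and a small sketch makes its sensitivity visible. It assumes steady doubling of GPU throughput and a start date of early 2016; the doubling periods tried are assumptions, not figures from the post. The desktop numbers in the first section (1 GFLOPS in 1999 to 500 GFLOPS by 2007-8) imply about log2(500) ≈ 9 doublings in 8 to 9 years, roughly one per year, which is the period that reproduces the 2017 estimate; slower doubling pushes the date out.

```python
import math

def year_threshold_reached(current_gflops: float, target_gflops: float,
                           start_year: float, doubling_years: float) -> float:
    """Year at which throughput that doubles every `doubling_years`
    grows from `current_gflops` to `target_gflops`."""
    doublings_needed = math.log2(target_gflops / current_gflops)
    return start_year + doublings_needed * doubling_years

# Figures from the text: ~180 GPU GFLOPS today, 500 GFLOPS threshold.
# ASSUMPTIONS: "today" is early 2016; the doubling periods are illustrative.
for period in (1.0, 1.5, 2.0):
    year = year_threshold_reached(180, 500, 2016.0, period)
    print(f"doubling every {period} yr -> {year:.1f}")
# doubling every 1.0 yr -> 2017.5
# doubling every 1.5 yr -> 2018.2
# doubling every 2.0 yr -> 2018.9
```

Under yearly doubling the threshold falls in 2017; under the 18- or 24-month periods often quoted for Moore's Law it slips to 2018.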

But should we even be talking about smartphones here? Forget about “PC” meaning desktop – the truly personal computer has moved from your desk to your lap to your pocket. Where is it going next? On your wrist, on your face? This is a question about the intersection of device use and power, not either one alone. The precise question is, “When is the type of computer that most people use every day going to be capable of 500 GFLOPS?” I still think this is a question about smartphones, but who knows?

3. VISUAL DISPLAY

WHAT: A computer only simulates the environment; you also need to be able to see what the computer is simulating. For many years, the way most people have seen a computer’s output is through a monitor. Now, Oculus Rift and other goggles are coming into the mass consumer market, and these are so good that they’ve ushered in the current wave of excitement about VR.

WHY: Sight is the most important sense in giving people a feeling that they are somewhere other than where they’re sitting. It’s not the only required sense, but without seeing a virtual environment, most people cannot begin to immerse themselves in the experience. I used to think that a flat monitor of sufficient size and resolution could provide a compelling enough VR experience, but using the most advanced VR goggles today simply blows away the monitor experience.

WHEN: The major unsolved problem with VR goggles is that using them for too long induces nausea. Although Oculus and others have made a lot of progress on this, it’s only to the point where nausea is delayed rather than eliminated. A product that makes you puke is never going to be mass market. As a rough guess, drawing on many years of observing consumer hardware cycles, I’m going to say that it will take three years to sufficiently refine VR goggles to smooth away the nausea and other early problems, so it will be late 2018 before this field is really ready for mass consumption.

4. AUDIO FIDELITY

WHAT: Properly spatial audio means that sound should be directional: you should be able to hear where a sound is coming from. Beyond direction, you should also be able to distinguish sounds from each other even when they come from the same direction or are obscured by ambient sound. This latter goal is called the “cocktail party problem”: even in a noisy cocktail party, you can focus on and hear a single speaker who isn’t necessarily louder than the party noise.

WHY: Seeing may be believing, but hearing is confirmation. The audio experience is often overlooked and undervalued, but the sound of being in a space is crucial confirmation that your brain can believe what you see. It’s possible that the nausea of the VR goggles experience is due to insufficient confirmation from other senses, and hearing is probably both the most important and the easiest sense to add to the virtual environment, though some might advocate for smell or taste (uh, yuck).

WHEN: “3D audio” has been around for many years, but the cocktail party problem remains unsolved despite recent advances. Still, the current state of the art in spatial audio is very good, and probably good enough without fully solving the cocktail party problem. I think we’ll see really excellent integration of audio fidelity with VR goggles even before the VR goggles are fully nausea-free, so let’s say that the audio component will be ready by 2017.

5. 3D INPUT PERIPHERALS

WHAT: This is the most important area that not enough people are talking about. Virtual reality requires a host of new technologies for allowing a whole body to interact in a 3D space: hand and finger movement, body position, eye-tracking, multidirectional treadmills. Every single one of these is a new Oculus-size opportunity in the making.

WHY: A keyboard and mouse or trackpad are not designed, and not sufficiently adaptable, for a person to move easily in a three-dimensional computed environment. The only major input innovation in mass-market computing devices that we’ve seen in the last 20 years has been multitouch on a smartphone or tablet, and that doesn’t help much for 3D.

WHEN: We have yet to see a breakthrough product here, despite many promising efforts. The field is extremely diverse, and it could take many years to sort out the winners. Somewhat arbitrarily, I guess it will be at least 2019 before we have mass consumer products that enable all of the tactile, visual and auditory input needed for compelling VR.

6. BANDWIDTH

WHAT: Though it’s easy to imagine a VR experience that is entirely created by a single computer at a single place (somewhat like watching a movie at a theater), it is much more likely that many computers will need to talk with each other over distance, and that requires network bandwidth (like the Web).

WHY: The design of the particular VR experience that defines success really comes into play here. For example, this post assumes “mass market VR” will be enabled by personal computing devices and that multiple people can share a VR experience from different locations. That means that larger computers will perform important tasks and coordinate communication with the smaller computers that people have. The amount of bandwidth required can vary greatly depending on what demands the system is making on the computers involved.

WHEN: If you think that VR is going to reach the mass market through smartphones or whatever successor device we carry around with us, then you’re bottlenecked by the state of wireless cell networks. Although high-speed data connections are broadly available in major metropolitan areas, they are unreliable even there and unavailable outside the most densely populated areas. Given the slow rate of evolution of cell networks, it will be at least 2022 before bandwidth is sufficient for VR everywhere.

Many VR enthusiasts instead picture mass-market adoption through desktop computers, gaming consoles, or other specialized hardware that has yet to reach the mass market. All of these would use wired connections up to the wireless access point, so for that camp, we could say that bandwidth is already sufficient.

7. LATENCY DESIGN

WHAT: Latency, the delay in computers communicating with each other, is sometimes related to bandwidth, but this field is included as a separate factor to encompass other network quality issues as well as the sheer physics of data traveling across large terrestrial distances.

WHY: Some amount of perceptible latency is unavoidable as a matter of physics if we are talking about communication across the world. So to the extent that the VR experience relies on real-time interaction with a global population, acceptable latency must be designed into the experience, mediated somehow to make the perception of latency acceptable.
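A back-of-the-envelope calculation shows the physical floor. It assumes light in optical fiber travels at roughly two-thirds of its vacuum speed, about 200,000 km/s, and takes half of Earth's circumference (about 20,000 km) as the greatest distance between two participants. Real routes are longer than the great-circle path and add routing and queuing delay, so actual latency is higher than this bound.

```python
# Approximate signal speed in optical fiber: ~200,000 km/s = ~200 km per ms.
FIBER_KM_PER_MS = 200.0

def min_one_way_latency_ms(distance_km: float) -> float:
    """Lower bound on one-way delay from propagation alone (no routing,
    queuing, or processing time)."""
    return distance_km / FIBER_KM_PER_MS

# Roughly half of Earth's ~40,000 km circumference: two people on opposite sides.
antipodal_km = 20_000
one_way = min_one_way_latency_ms(antipodal_km)
print(f"one-way >= {one_way:.0f} ms, round trip >= {2 * one_way:.0f} ms")
# one-way >= 100 ms, round trip >= 200 ms
```

No amount of extra bandwidth lowers this floor, which is why latency is treated as its own design problem rather than a side effect of bandwidth.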

WHEN: Arguably, this is a problem that is solved now for many types of VR experiences, but I include it here because I’ve seen many VR proposals that don’t consider how latency must be designed into the experience. We’ll see some common design patterns emerge in the first year after the current crop of headsets is released, and they’ll formalize into best practices by next year, so we’ll call this solved by 2017.
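As one illustration of designing latency into an experience, networked games commonly use entity interpolation: remote participants are deliberately rendered a short, fixed delay in the past, so their motion can be blended smoothly between received updates instead of stuttering as packets arrive unevenly. This is a minimal one-dimensional sketch of that general pattern, not a method proposed in this post; the `Snapshot` type, `interpolated_position` function, and the delay value are hypothetical names and parameters chosen for illustration.

```python
from bisect import bisect_right
from dataclasses import dataclass

@dataclass
class Snapshot:
    t: float  # timestamp of a received update, in seconds (hypothetical)
    x: float  # one coordinate of a remote avatar's position (hypothetical)

def interpolated_position(snapshots: list[Snapshot], now: float, delay: float) -> float:
    """Show a remote avatar as it was `delay` seconds ago, blending the two
    buffered snapshots that bracket that moment. Assumes snapshots are
    sorted by strictly increasing timestamp."""
    render_t = now - delay
    times = [s.t for s in snapshots]
    i = bisect_right(times, render_t)
    if i == 0:
        return snapshots[0].x   # earlier than any update: hold the oldest
    if i == len(snapshots):
        return snapshots[-1].x  # later than any update: hold the newest
    a, b = snapshots[i - 1], snapshots[i]  # a.t <= render_t < b.t
    frac = (render_t - a.t) / (b.t - a.t)
    return a.x + (b.x - a.x) * frac

buffer = [Snapshot(0.0, 0.0), Snapshot(0.1, 1.0), Snapshot(0.2, 2.0)]
print(interpolated_position(buffer, now=0.25, delay=0.1))  # about 1.5
```

The trade-off is explicit: a larger delay hides more network jitter but makes remote participants appear further behind real time.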

So, when will “The Year of VR” really be? My rough guess is 2019 at earliest, 2022 for the more ambitious visions of mass-market VR that include mobile computing.
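The framework behind this estimate reduces to taking a maximum: mass-market VR arrives only when the slowest of the seven fields crosses its threshold. The sketch below encodes the per-field guesses from the sections above as single years (a simplification; graphics is entered as 2009 on the reading that sufficient power has existed for about seven years) and switches the bandwidth year between the wired and mobile scenarios.

```python
# Year each field is judged "ready", taken from the estimates above.
READY_YEAR = {
    "graphics computing power": 2009,   # "has existed for at least seven years"
    "personal computing devices": 2017,
    "visual display": 2018,             # "late 2018"
    "audio fidelity": 2017,
    "3D input peripherals": 2019,
    "latency design": 2017,
}

def year_of_vr(bandwidth_ready: int) -> tuple[int, str]:
    """Return (year, bottleneck field): VR is ready when every field is."""
    fields = dict(READY_YEAR, bandwidth=bandwidth_ready)
    bottleneck = max(fields, key=lambda name: fields[name])
    return fields[bottleneck], bottleneck

print(year_of_vr(bandwidth_ready=2016))  # (2019, '3D input peripherals')
print(year_of_vr(bandwidth_ready=2022))  # (2022, 'bandwidth')
```

Progress in any single field leaves the result unchanged unless that field is the bottleneck, which is the mistake behind premature "year of VR" predictions.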

My point isn’t to be right on the prediction though; here I just wanted to give a more rigorous framework for making predictions of mass market success. When people claim that this or any year will be the year of VR, they should be clear on what the state of progress needs to be on each of these seven pieces. Considering progress in just one field alone has led to many, many mistakenly optimistic predictions.
