For over 20 years, Internet businesses have grown under the protection of a special law that provides extraordinary privileges. This law has properly been hailed as a boon to innovation, and has become enshrined in some quarters as an indispensable pillar of free speech. However, no law regarding technology can survive the merciless rule of unintended consequences; what was once a necessary sanctuary has become a virtual menace to society. If you wonder how the United States has reached the brink of electing a deplorable villain as its leader, at least part of the answer rests with the Internet’s most generous law.

This law is Section 230 of the Communications Decency Act of 1996. The bulk of the act was a misguided attempt to regulate “indecent” content on the Internet, and most of those provisions were rightfully struck down by the Supreme Court in the name of the First Amendment. But Section 230 was a special provision inserted late in the legislative process, out of concern that nascent Internet businesses would drown in legal liability for statements made by others. Section 230 states:

No provider or user of an interactive computer service shall be treated as the publisher or speaker of any information provided by another information content provider.

This is a shield from libel and defamation suits, an amazing advantage in the rise of the new media of the Internet. The impetus for this law came from a 1995 case where an Internet service provider was found liable for defamatory statements made by a user of its message board. The court’s reasoning included the fact that the Internet service had exercised some editorial control over some of the message board content; therefore the service could be treated as the publisher of all the content, just as a newspaper would be.

In 1995, this was a horrific decision made by technology-illiterate judges who had no understanding of the power and potential of the Internet. It would be nice to think that the Congressmen who inserted Section 230 into the CDA were blessed with extraordinary foresight into the future of technology. But no – actually they just wanted to be sure that Internet companies would be willing to help hide boobies.

Remember, the bulk of the CDA was an insane Sisyphean effort to stop the spread of pornography on the Internet. Internet providers were rightly concerned that they would never be able to stop all the boobies. They argued that the 1995 case showed that any failed attempt to censor boobies would be interpreted as editorial control, making them liable for all the boobies that did get through. So these Congressmen inserted Section 230 as a way of saying to companies “Hey, just try your best to censor boobies, you won’t be held liable as a publisher of the boobies that did get through.” Internet companies, even in 1995, were smarter than Congressmen. Although the CDA was about as effective at reducing pornography on the Internet as a cocktail umbrella in a hailstorm, Section 230 emerged from this fragile legislation as an enduring and invaluable shield against liability. Now you can’t sue Facebook for publishing information that is verifiably false and harmful. Lives can be destroyed on the sites we live on, and those sites will never be held responsible.

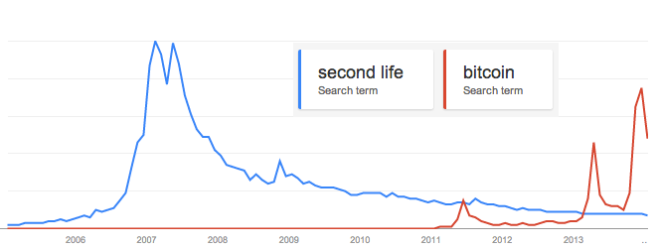

The EFF says Section 230 is “one of the most valuable tools for protecting freedom of expression and innovation on the Internet” and the ACLU says that this law “defines Internet culture as we know it.” These eminent bastions of free speech have been tremendous warriors for a lot of good in our society, but like anyone else, they could not predict the future and they may cling too long to brittle ideas that are past their expiration date. When Section 230 was adopted, the Internet was the Wild West, the new American frontier for development. There were no dominant Internet companies. The law was written with Prodigy and CompuServe in mind; AOL was the up-and-comer, Yahoo was barely a year old. The media lifeblood of the nation was the three broadcast networks, the New York Times and the Washington Post, and the many local newspapers throughout the country. People who understood the Internet then were rightly concerned about legal liability crushing the industry in its infancy.

We live in a very different world today. Network effects make some large portions of the Internet into a winner-take-all game where the behemoths can quickly grow into billion-dollar enterprises, affecting billions of lives daily. Traditional media is dead and dying, a boon to experimentation and diversity, but a blow to authority and truth. Technologists were proud to disintermediate and destroy the old gatekeepers, but we engaged in this merry destruction without any thought to the vital purpose that the Fourth Estate served in our politics. And now we live in a nation where most days it seems like the only people who don’t believe the next president could be a racist, misogynist, fascist despot are the ones who believe she could be an acceptably corrupt continuation of a broken political system.

The gatekeepers are dead and most people only get their news from their friends and others in the same echo chamber on Facebook. Our public discourse is conducted on Twitter, where online harassment by anonymous, cowardly sexists and racists is treated as an acceptable form of free speech. And we are still, as we always are in technology, only at the beginning of our problems. I don’t know where this is going any better than lawmakers did in 1996; I don’t have a solution – but I do think we should take the thumb off the scales that favor Internet businesses.

A similar situation occurred with respect to state sales taxes. In a 1992 case, the Supreme Court ruled that businesses with no physical presence in a state did not have to collect sales tax in that state. Amazon exploited this ruling, carefully building its business to avoid having to impose state sales taxes, giving it an advantage over local businesses. By 2012, Amazon saw the writing on the wall, and began “voluntarily” collecting sales tax in many more states than it had previously done. But by that time, the West had been won: Amazon was the dominant online retailer, and Main Street businesses had been all but destroyed. Amazon had the foresight to act ahead of the change in the laws, which is coming anyway. I fear our dominant Internet services lack the moral courage to act in the interests of our country.

Facebook and Twitter are our new public square, and although they are private businesses they should not be exempt from the laws and social requirements of other businesses that regularly gather large groups of people together. No shopping mall, for example, would allow the public posting of verifiably untrue, insane ramblings, not without damage to its business as well as legal liability. No sporting venue would allow its women to be spit on, its minorities to be subjected to vile racist invective, without losing business and facing lawsuits. And yet we allow our most significant public gatherings online to be completely free of the obligations of being a publisher, obligations that supported the kind of media that have been vital to our properly functioning politics.

The Internet destroyed vast portions of traditional media that depended on fact, truth and integrity. This hasn’t been solely a triumph of progress and free market principles; it has been a creative destruction assisted by a sweetheart deal with the government. Under this mantle of government protection, technology companies replaced essential elements of democracy with endless misinformation, lies and insanity. Free speech should allow much of this to be possible, but those who would build a business on irresponsible dissemination of speech should be subject to the same laws as the businesses that they destroyed. It’s time to take the training wheels off of Internet culture. Section 230 of the CDA should be repealed.